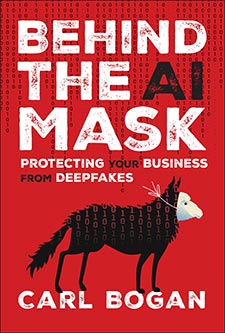

Deepfake Crime is Exploding—Here’s What Every Entrepreneur Must Know to Protect Their Empire Before It’s Too Late

Imagine sitting down for what you think is just another routine video call, only to find out later that the familiar face and voice you trusted were nothing but a digital smoke-and-mirrors trick—with $25 million gone in a blink. That’s the stark reality Carl Bogan explores in his eye-opening new book, Behind The AI Mask: Protecting Your Business From Deepfakes. It’s not just about slick tech; it’s about how our very instincts and trust are being weaponized against us. When deepfakes get close enough—not perfect, just close enough—they slip right past skepticism, leaving businesses and individuals exposed to unimaginable risks. So, here’s a question to stir your mind: in an age where your CFO’s “live video call” might be a cleverly crafted illusion, how do you know who to trust? Pull up a chair. Let’s dive into why seeing and hearing no longer guarantees authenticity—and what that means for your business’s future security.

In his new book Behind The AI Mask: Protecting Your Business From Deepfakes, author Carl Bogan brings his expertise to bear on the new cyber threat facing companies around the world.

A finance worker in Hong Kong joined what seemed like an ordinary video call. His company’s Chief Financial Officer appeared on screen, flanked by other senior executives, issuing instructions to transfer urgent funds. The CFO’s face moved naturally. His voice carried the same calm authority it always had. The instructions were clear. The urgency was real.

Trusting the process, the employee authorised the wire transfer.

By the time anyone realised what had happened, $25 million had vanished.

The CFO and the executives had never been on the call. The entire meeting had been an illusion, orchestrated by criminals using deepfake technology to mimic the company’s leadership in real time.

There were no obvious glitches, no distorted audio, no mismatched lip-syncing. The deception was precise where it mattered.

The finance worker wasn’t fooled by a poorly written phishing email or an obviously suspicious message. He had been deceived by something far more sophisticated, an attack that felt real in every way that counted.

Deepfake scams succeed through their ability to exploit expectations rather than through perfection.

The finance worker knew the CFO’s face. He had heard his voice on countless other calls.

Every detail was familiar enough to bypass the moment where doubt might have surfaced.

Even if the deepfake was not flawless, his brain had already filled in the gaps because there was no reason to question something he had always trusted.

His morning had started like any other. He reviewed his inbox, sipped his coffee and prepared for the scheduled call.

When the CFO appeared on screen, the finance worker barely thought twice. The lighting looked right. The background was the same office setting he was used to seeing.

His colleagues nodded along, responding as they normally would. Everything about the scene was mundane, expected, ordinary. But that was what made it dangerous.

There was no reason to be suspicious because nothing felt out of place.

At the end of the investigation, no arrests were made and no funds were recovered.

In hindsight, there were details in this video that might have seemed unusual — perhaps a slightly longer delay before responses, or an almost imperceptible stiffness in the executive’s movements — but those microsecond hesitations never surfaced in the finance worker’s conscious mind.

The scam worked because it fit perfectly into the pattern of what he had come to expect. The deepfake didn’t need to be flawless. It just needed to be close enough.

This scam highlights an uncomfortable truth. We are in an era where seeing and hearing no longer equate to believing.

Traditional markers of authenticity, such as facial recognition, voice verification and live video calls, are now vulnerabilities rather than safeguards.

The psychological shortcuts that allow us to navigate daily interactions efficiently, the trust we place in familiar faces and voices, have become liabilities.

Deepfakes exploit these instincts, allowing criminals to override scepticism with a seamless illusion of reality.

This represents a fundamental crisis in how we verify reality. For centuries, our ability to trust what we see and hear has shaped decision-making in critical areas such as business, law and personal relationships.

In courtrooms, video and audio evidence have often been regarded as irrefutable proof, determining the outcomes of high-stakes trials.

But what happens when that evidence can be forged as easily as a fake signature?

A video confession, once seen as the irrefutable gold standard of credibility, can now be challenged as an elaborate fabrication.

A damning phone call may now become suspect, not because it lacks clarity, but because it is too perfect, too convenient, and too easy to replicate in the digital age.

Judges and juries are not forensic analysts. They rely on a bedrock assumption: that what they see and hear in evidence is an authentic record of the truth.

Deepfakes absolutely break that assumption. They turn every piece of evidence into a potential trick, every voice into a mask.

In this new environment, prosecutors find themselves forced to defend not just the narrative of a case, but the very reality of the evidence that supports it.

Defence attorneys, in turn, gain a powerful new tactic: the ability to sow doubt not by proving an alibi, but simply by raising the possibility of a digital forgery.

This shift not only changes the rules of the courtroom but also reshapes the stakes. For centuries, justice has depended on the idea that you can test the credibility of a witness: that you can trust the camera, the tape recorder, the archived voicemail.

Deepfakes collapse that distinction. They turn even the most straightforward pieces of evidence into a battleground of verification and uncertainty.

In corporate settings, face-to-face interactions and voice verification have long been relied on to establish credibility and authorise major transactions.

In daily life, people lean on these familiar voices and faces as primary indicators of what’s real. This deep pattern of trust, once a strength, has become a weak point that deepfake technology exploits.

When you can generate convincing lies on demand, the boundary between the genuine and the synthetic fades. Individuals and organisations are left navigating a world where the rules for recognising authenticity no longer hold steady.

They face not just confusion, but a profound risk of manipulation and exploitation: of decisions made in good faith turning against them, of relationships and reputations becoming collateral in someone else’s scheme.

It’s a landscape where illusions can be made to look as real as the truth itself, and the consequences can be permanent.

With everything becoming so digital, will we be forced to return to in-person meetings?

Will people start travelling several times a week for face-to-face interactions simply because they can no longer trust digital representations?

This is more than a question of convenience; it’s a question of security — of being able to look someone in the eye and know they’re real.

This is an edited extract from Behind the AI Mask: Protecting Your Business From Deepfakes by Carl Bogan (published by Wiley).

Carl Bogan pioneered viral deepfake technology as creator of Myster Giraffe, generating hundreds of millions of views across social media. With over 20 years in visual effects for Disney, Paramount, Apple, Google and Sony, he brings a unique perspective to deepfake defence — understanding how these illusions are crafted from the artist’s side, not the IT department. He has taught workshops internationally, including at Cisco, helping organisations see deepfake threats through the eyes of the creators who build them.
