Google Drops Gemini 3.1 Flash Lite: The Fastest and Cheapest Gemini 3 Model Yet, But What’s the Real Impact?

Ever wonder whether faster, cheaper AI could lift your business without breaking the bank? Google just put a fresh contender in the ring with Gemini 3.1 Flash Lite, a fast, budget-friendly model built to handle high-volume workloads without making you sweat over cost or lag. It is debuting in preview through the Gemini API in Google AI Studio and, for enterprise use, through Vertex AI. What’s exciting is the balance it strikes: quick response times paired with one of the lowest price points in Google’s lineup, starting at twenty-five cents per million input tokens. For those of us who live and breathe efficiency and ROI, that could be a game changer, especially when flexibility on complex tasks matters as much as sheer speed. Curious how it stacks up on benchmarks, and how you might use its adjustable thinking levels to tune performance for your own project? It looks like a promising move for developers and businesses alike.

Google today introduced Gemini 3.1 Flash Lite, a new artificial intelligence model designed to deliver faster responses and lower operating costs within the company’s Gemini 3 model family.

The model is rolling out in preview to developers through the Gemini API in Google AI Studio and to enterprise customers through Vertex AI.

Google described Gemini 3.1 Flash Lite as the fastest and most cost-efficient model in the Gemini 3 series, built specifically for high-volume workloads where latency and cost are critical.

Pricing for the model starts at $0.25 per million input tokens and $1.50 per million output tokens, positioning it as one of the lowest-cost options in Google’s current AI model lineup.
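To make those per-token prices concrete, here is a minimal Python sketch that turns token counts into a dollar estimate. The two rates come from the pricing quoted above; the function name `estimate_cost_usd` and the example workload (one million requests at 400 input and 100 output tokens each) are hypothetical, and actual bills may differ if pricing tiers or preview terms change.

```python
from decimal import Decimal

# Preview prices quoted in the article, in US dollars per one million tokens.
INPUT_USD_PER_MILLION = Decimal("0.25")
OUTPUT_USD_PER_MILLION = Decimal("1.50")
TOKENS_PER_MILLION = Decimal(1_000_000)


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate spend for a workload from its total token counts."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    input_cost = Decimal(input_tokens) / TOKENS_PER_MILLION * INPUT_USD_PER_MILLION
    output_cost = Decimal(output_tokens) / TOKENS_PER_MILLION * OUTPUT_USD_PER_MILLION
    return input_cost + output_cost


# Hypothetical workload: 1,000,000 requests, each using
# 400 input tokens and 100 output tokens.
requests = 1_000_000
total = estimate_cost_usd(requests * 400, requests * 100)
print(f"Estimated cost: ${total:.2f}")
```

Because output tokens cost six times as much as input tokens at these rates, trimming response length tends to move the total more than trimming prompts.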

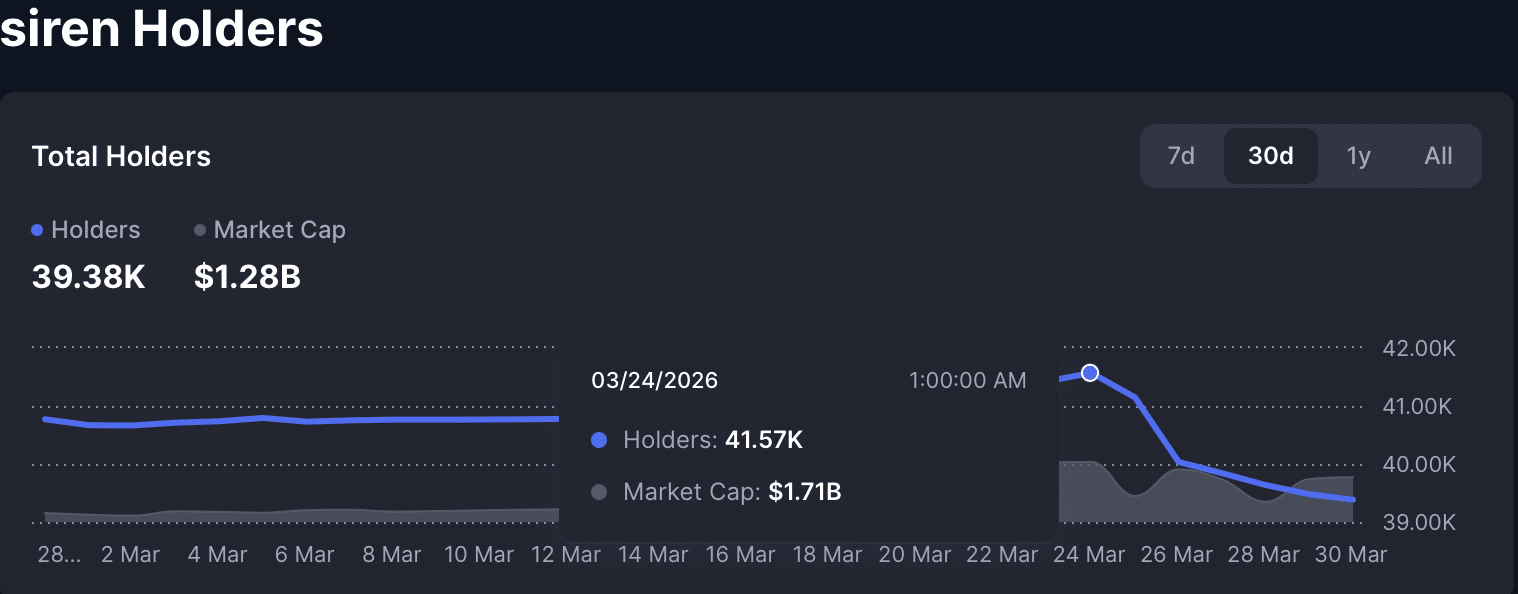

According to benchmarks cited by Google, Gemini 3.1 Flash Lite reaches its first answer token 2.5 times faster than Gemini 2.5 Flash and generates output 45 percent faster, while maintaining similar or better quality.
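The two speed figures affect different parts of a response, so their combined effect depends on how long the answer is. The sketch below is a rough back-of-the-envelope model, not a benchmark: it assumes total response time is time to first token plus output length divided by throughput, reads "2.5 times faster" as dividing time to first token by 2.5, and reads "45 percent faster" as multiplying throughput by 1.45. The baseline numbers (1.0 second, 100 tokens per second) are hypothetical placeholders, not measurements of Gemini 2.5 Flash.

```python
# Speedups quoted in the article, under the interpretation described above.
TTFT_SPEEDUP = 2.5      # time to first answer token is divided by this
OUTPUT_SPEEDUP = 1.45   # output throughput is multiplied by this


def response_time_s(ttft_s: float, tokens_per_s: float, output_tokens: int) -> float:
    """Simple latency model: wait for the first token, then stream the rest."""
    if tokens_per_s <= 0:
        raise ValueError("tokens_per_s must be positive")
    return ttft_s + output_tokens / tokens_per_s


def flash_lite_estimate(baseline_ttft_s: float, baseline_tokens_per_s: float,
                        output_tokens: int) -> float:
    """Apply both quoted speedups to a baseline and return the new estimate."""
    return response_time_s(
        baseline_ttft_s / TTFT_SPEEDUP,
        baseline_tokens_per_s * OUTPUT_SPEEDUP,
        output_tokens,
    )


# Hypothetical baseline values, chosen only to illustrate the arithmetic.
BASELINE_TTFT_S = 1.0
BASELINE_TOKENS_PER_S = 100.0

for output_tokens in (50, 500, 5000):
    before = response_time_s(BASELINE_TTFT_S, BASELINE_TOKENS_PER_S, output_tokens)
    after = flash_lite_estimate(BASELINE_TTFT_S, BASELINE_TOKENS_PER_S, output_tokens)
    print(f"{output_tokens:>5} tokens: {before:.2f}s -> {after:.2f}s")
```

Under this model, short replies benefit mostly from the faster first token, while long replies are dominated by the throughput gain.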

Performance benchmarks also place the model competitively against other lightweight AI models. Gemini 3.1 Flash Lite achieved an Elo score of 1432 on the Arena AI leaderboard and recorded 86.9 percent on the GPQA Diamond reasoning benchmark and 76.8 percent on the MMMU Pro multimodal benchmark.

Google said the model is designed to handle high-frequency developer tasks such as translation, content moderation and large-scale instruction following, while still supporting more complex workloads like interface generation, simulation creation and structured data tasks.

The release also introduces adjustable thinking levels within AI Studio and Vertex AI, allowing developers to control how much reasoning the model performs depending on the complexity of a task. This flexibility is intended to help teams balance cost, speed and accuracy when deploying AI applications at scale.
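One way a team might put adjustable thinking levels to work is a small routing table that picks a level per task type. The sketch below is illustrative only: the level names `"low"` and `"high"`, the task labels, and the function `pick_thinking_level` are assumptions made for this example, not values confirmed by Google's announcement. How the chosen level is passed to the Gemini API or Vertex AI depends on the SDK and is deliberately left out.

```python
from enum import Enum


class ThinkingLevel(str, Enum):
    """Hypothetical level names; check the official docs for the real values."""
    LOW = "low"
    HIGH = "high"


# Task groupings follow the article: high-frequency work versus more
# complex workloads. The string labels themselves are made up for this sketch.
HIGH_FREQUENCY_TASKS = {"translation", "content_moderation", "instruction_following"}
COMPLEX_TASKS = {"interface_generation", "simulation_creation", "structured_data"}


def pick_thinking_level(task_type: str) -> ThinkingLevel:
    """Choose less reasoning for high-volume tasks and more for complex ones."""
    if task_type in HIGH_FREQUENCY_TASKS:
        return ThinkingLevel.LOW
    if task_type in COMPLEX_TASKS:
        return ThinkingLevel.HIGH
    raise ValueError(f"unknown task type: {task_type!r}")


for task in ("translation", "simulation_creation"):
    print(task, "->", pick_thinking_level(task).value)
```

Keeping the mapping in one place makes it easy to re-tune the cost, speed and accuracy trade-off as real usage data comes in.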
